The Problem with Chat

Chat interfaces present a few user experience challenges that will need to be thoughtfully addressed.

Okay, so I’ve been talking up ChatGPT and the like for a bit now. And I do stand by everything that I’ve said and written about it. I think the reason ChatGPT blew up was the chat interface. We all understand chat, which makes it a great interface to a computer. Just say what you want and the computer will figure out what you mean and get you an answer, or at least tell you that it doesn’t know. It certainly beats filling out a form with a bunch of inputs, dropdowns, sliders, etc. And that’s before anything goes wrong. If something goes wrong in a form or you don’t get the response you were expecting, there’s no back and forth to figure it out. You’re left on your own to either throw up your arms or, frustrated and concerned, ping the company’s customer support team to figure out what happened.

I am also a firm believer that we will see chat everywhere in the next 6-12 months. Every software product will, in one way or another, incorporate a chat component. Most of them already have some sort of chat component today; it just happens to be focused mostly on customer support. This will change, however, and over the next couple of months, many of the forms you used to fill out will be replaced with a chat-like interface. And it won’t be just forms; there will be lots of other use cases for chat in the products you use today. Products that don’t have a chat interface will feel as antiquated as landline phones feel today.

But the more I play around with chat interfaces and the products that use them, the more I realize it’s not all positive. While chat is a vast improvement on the inputs we’re used to filling out online, it does do worse than traditional forms on one key measure: affordances. Using the definition from the designer Don Norman in his book The Design of Everyday Things, an affordance is the relationship between the properties of an object and the capabilities of the agent that determine just how the object could be used. Put another way, affordances determine what actions are possible.

While a chat interface does do well in letting you know it expects a text input, it also suffers from the paradox of choice. ChatGPT and the like offer an endless number of possibilities! But they also offer an endless number of possibilities. Where do you even begin?

I actually think this is why people eventually hit roadblocks with chat interfaces like ChatGPT. It has proven extremely useful for anyone who has a problem they need solved and knows what that problem is. But as a saying often attributed to Albert Einstein goes, it’s not the solution that’s the hard part, it’s coming up with the question. And herein lies the problem: when we’re just given a blank screen and an empty input, we struggle to figure out what to do.[1]

If I put my product manager hat on for a second, there’s also something else that we all deal with in designing products. People are kinda lazy. That’s not a bad thing, necessarily (laziness can lead to innovation and creativity).[2] But if we’re given the option between otherwise equal offerings, we will 100% of the time select the more convenient of the two. It’s why you won’t download an app for buying something online when the merchant’s website is perfectly usable. It’s also why, if you have downloaded that app, it’s likely still on your phone today, even though you don’t use it.

As chat interfaces become more ubiquitous throughout the various products we use, they will all face this same challenge. The good news is that you can already see some potential solutions or ways to navigate this problem beginning to emerge.

Our preference for convenience is part of why there’s a lot of hype currently around “AutoGPT” and the like. AutoGPT still suffers from the initial prompt issue of endless possibilities, but it at least addresses the “just do it for me” part of our nature. I’ve also been seeing a few other good attempts out there.

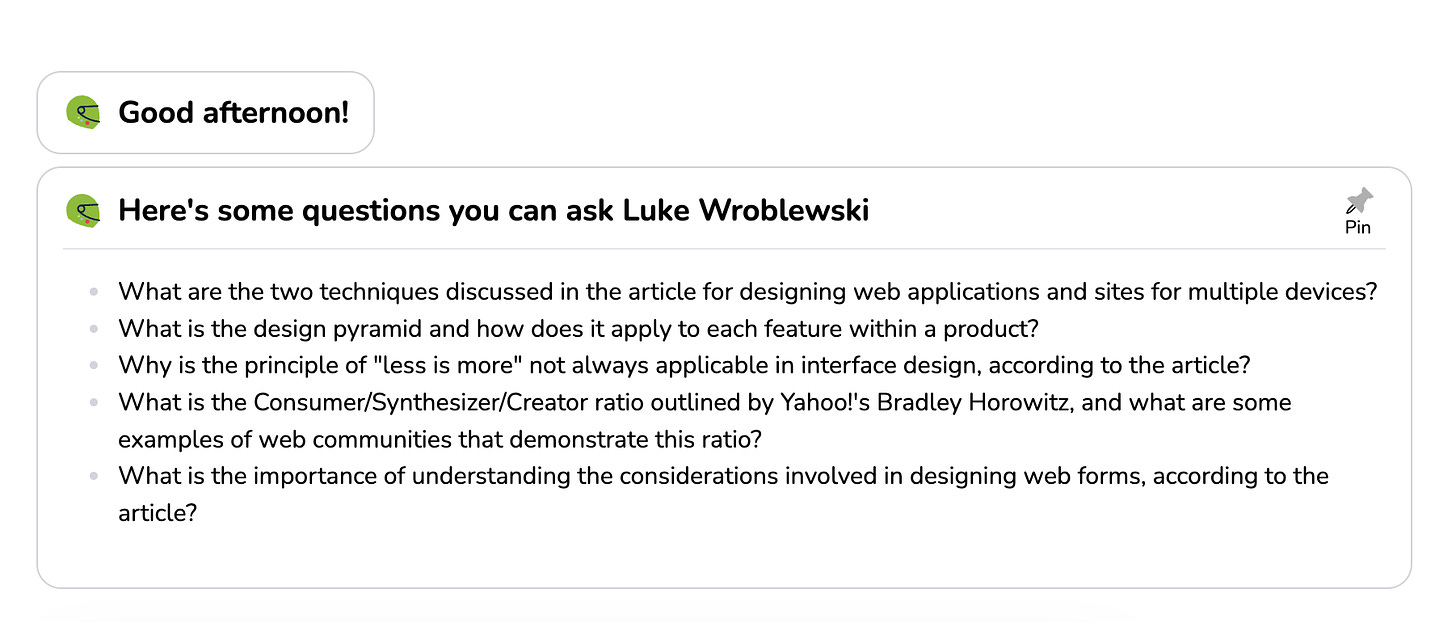

One of those is from Luke Wroblewski (aka LukeW online). Luke is pretty well known in design and product circles for his work, so this isn’t much of a surprise, but I wanted to share how he does it. If you go to a particular part of his website, you’ll see that you’re presented with a chat interface.

But here’s where this page is different from something like ChatGPT: on Luke’s site, the conversation is started by the assistant, not by the user. This is a common pattern among support chat products. What I really like about Luke’s approach, though, is that it’s still open (you can ask anything if you want; he doesn’t hide the input like some other products do), but it also provides you with some questions that it’ll be able to handle and answer. In addition, the site changes which questions are presented each time you visit (try refreshing the page), giving you new ways to discover what to do. Now, this isn’t an earth-shatteringly new user experience (UX); others are doing it or have done it before. But it is thoughtful, and I think it points toward where many products will be going. It’s a combination of guidance and open-endedness.
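For the builders reading along, here’s a rough sketch of that pattern in Python: the assistant speaks first, offers a few suggested questions sampled fresh on every visit, and leaves the free-text input open. To be clear, this is my own illustration and not how Luke’s site is actually built. The question pool, the `open_conversation` function, and the field names are all made up for the example.

```python
import random

# Hypothetical question pool; none of these come from Luke's actual site.
SUGGESTED_QUESTIONS = [
    "What makes a good mobile form?",
    "How should I design onboarding?",
    "When is a chat interface the wrong choice?",
    "How do I reduce sign-up friction?",
    "What should I show on an empty screen?",
]


def open_conversation(pool, count=3, rng=None):
    """Build the assistant's opening turn: a greeting plus a fresh sample of suggestions."""
    rng = rng or random.Random()
    # Sample without replacement so the same question never appears twice.
    suggestions = rng.sample(pool, k=min(count, len(pool)))
    return {
        "role": "assistant",
        "text": "Hi! Ask me anything, or start with one of these:",
        "suggestions": suggestions,
        # The input stays open; the suggestions guide, they don't restrict.
        "free_text_enabled": True,
    }


if __name__ == "__main__":
    # Each call stands in for a page load and may show a different set.
    print(open_conversation(SUGGESTED_QUESTIONS))
```

The part that matters is the combination: the suggestions answer “where do I even begin,” and the open input keeps everything else possible.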

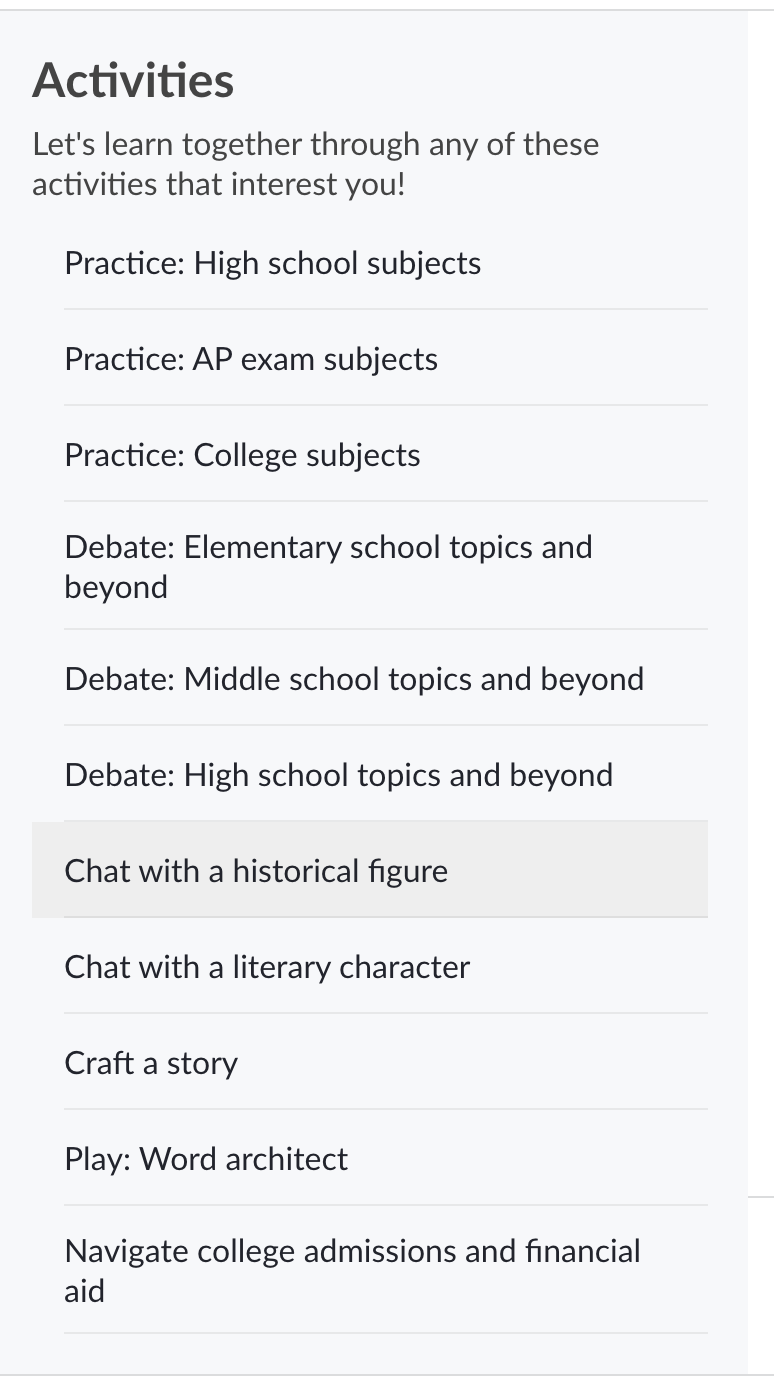

Another example of this worth sharing is Khan Academy’s new Khanmigo product.[3] It’s brand new and I just got access to it the other day, but I think they’ve done a really good job with the UX, especially since they have a more focused mission for the product, which is to help people learn. In theory, with an open-ended interface, anyone could type anything they wanted, which could lead to some not so great experiences or pull you away from the product’s whole purpose. They also have a very challenging user base to navigate: children. But I think they’ve done a really good job with it so far. There are some quirks that I’m sure they’re working on addressing, but they seem more technical than UX-related.

Like LukeW’s example above, they start the conversation with an assistant’s message, introducing you to the conversation and letting you know how you can get the most from it.

But what really makes this great is the second thing I think they did really well, which is to provide you with a sidebar of “activities” that you could do. Instead of centering around completely open-ended conversations, like ChatGPT, they provide you with a sort of menu of options of experiences or interactions that you can have. This is a great starting point. They still have the open-ended conversation option, but they have a lot of other starting points that you could try, which addresses the “where should I even begin” issue.

What’s great about combining this approach with the assistant starting the conversation is that each activity you select now comes with a targeted assistant message that helps guide your initial conversation. This is awesome because not only does it give you a starting point and help you figure out what you can do, but it also has all the benefits of the chat interface, allowing you to take the conversation in whatever direction you want. I particularly like the game activity, since chat seems like such a natural way to play a game. I’d expect many more takes on games that use chat-like interfaces to be coming soon.
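Here’s a minimal sketch of how an activity menu with targeted opening messages could be wired up. It’s purely illustrative: the activity names, the opening messages, and the `start_activity` helper are my own inventions, not Khanmigo’s actual menu or code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    name: str
    opening_message: str


# Hypothetical activities and wording, not Khanmigo's real menu.
ACTIVITIES = {
    "open_chat": Activity("Open conversation", "What would you like to talk about?"),
    "tutor": Activity("Tutor me", "What subject are you working on today?"),
    "game": Activity(
        "Play a game",
        "I'm thinking of a number between 1 and 20. What's your first guess?",
    ),
}


def start_activity(key):
    """Return a new conversation seeded with the activity's targeted assistant message."""
    # Unknown keys fall back to the open-ended conversation.
    activity = ACTIVITIES.get(key, ACTIVITIES["open_chat"])
    return [{"role": "assistant", "text": activity.opening_message}]


if __name__ == "__main__":
    for key in ACTIVITIES:
        print(key, "->", start_activity(key)[0]["text"])
```

Every path still ends in a normal chat. The menu only decides what the assistant says first, so the open-ended option is just one more entry rather than a special case.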

I understand that Khan Academy’s and LukeW’s products are different from ChatGPT. They have different goals, different audiences, and different purposes. But I’ve also begun to see personally how my own ChatGPT usage has bumped up against the paradox of choice. I’ve gotten early access to ChatGPT’s plugins, on both the developer side and the user side, and there are a lot of cool things that you can do, but I’m also probably doing 1/1000th of the things I could be doing. It’s hard sometimes to know where to even begin. It’s also still true that I have problems today that could be addressed by ChatGPT, but I either don’t know it could help or I forget to ask it. Both of these could be addressed with some thoughtful product adjustments.

And all of this is still coming at it from the chat interface perspective. I think there’s a ton of other things we might get out of large language model AIs that have nothing to do with chat. A good initial example of another approach is GitHub’s Copilot product. It works alongside you as you code, giving you very targeted suggestions you can choose to use or ignore.[4]

Just because these models can work with human language doesn’t mean they are limited to only working with humans. As I’ve been playing around with various concepts, I’ve begun to experiment with things like having different assistants, tasked with different objectives, work with (or sometimes even against) each other with little or no user input. Another is giving the assistants more context around what the conversation might be about and having them start with something from outside the conversation window. Or providing the agents with some objective and pointing them to other types of inputs,[5] things like notifications, events, APIs, etc.

There’s a whole other post (or couple) that I could write about some of this stuff, but I just wanted to share some thoughts on the challenges that I think chat products and interfaces will start to have, and on potential ways companies and teams might begin to think about overcoming them.

I have a lot more ideas on this concept, but I’d also be curious whether you agree, disagree, or have other perspectives. Have you bumped up against the paradox of choice and affordance problem yet? If not, why not?

Until next time, ✌️

[1] The irony of all of this is that predicting what comes next is exactly what these large language models are trying to do. But it’s honestly hard to do. Ask any writer. Writer’s block can be a struggle, but it’s also defeated as soon as you put something down. Just start writing, anything at all, and the rest will come. But I digress.

[2] Don’t take this the wrong way. I’m lazy too. We all are, and a lot of what drives us is making our lives or others’ lives easier, which is a good thing.

[3] For a demo view of it, check out Sal Khan’s TED Talk.

[4] Of course, GitHub is also planning to add a chat component to their Copilot product, but I think having the combination of the two is the magical part. If it were just chat, I think programmers might struggle with it more. The combination of the two is really clever. Plus, from what I can tell, they’ll have things like shared context, so that the chat can be kicked off with the context of what you’re looking at.

[5] Still text-based, but you’d be surprised by how many different things are text or can be converted to text, and by how well these models understand them.